Claude 3.7 vs GPT-4o vs Gemini 2.5 Pro 2026: Which Model Wins?

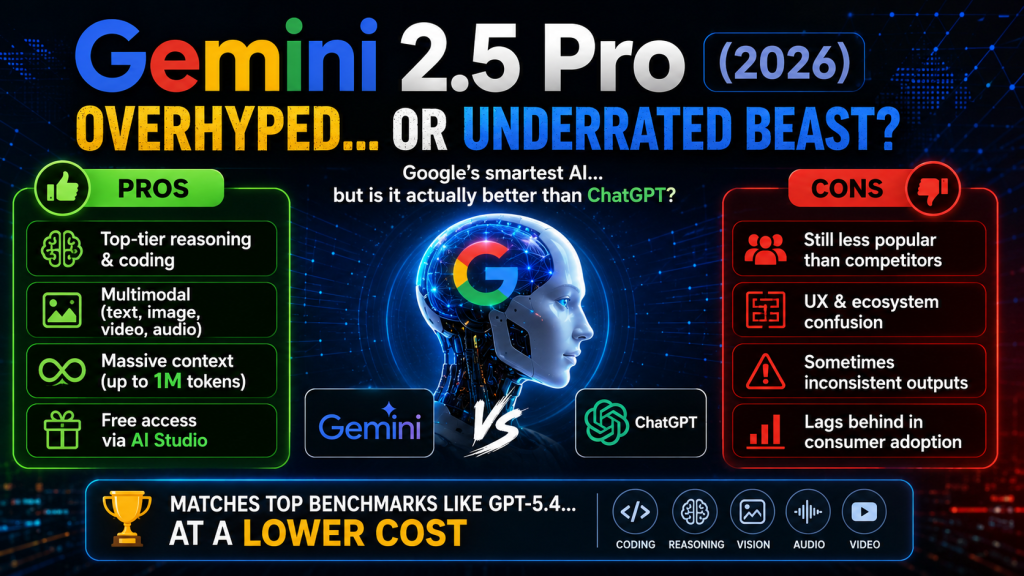

Claude 3.7 vs GPT-4o vs Gemini 2.5 Pro in 2026 — three elite AI models, each with distinct strengths. Claude 3.7 Sonnet leads on reasoning, coding, and instruction-following. GPT-4o wins on multimodal tasks and the broadest ecosystem. Gemini 2.5 Pro dominates on context length (1M tokens) and native Google integration. Here’s the complete, no-hype comparison.

Model Overview: Claude 3.7 vs GPT-4o vs Gemini 2.5 Pro

| Spec | Claude 3.7 Sonnet | GPT-4o | Gemini 2.5 Pro |
|---|---|---|---|
| Creator | Anthropic | OpenAI | Google DeepMind |
| Context window | 200,000 tokens | 128,000 tokens | 1,000,000 tokens |
| Extended thinking | Yes (hybrid mode) | No (offered by the separate o-series models) | Yes (thinking mode) |
| Multimodal inputs | Text + Images | Text + Images + Audio | Text + Images + Video + Audio |
| Real-time web access | No (base model) | Yes (ChatGPT) | Yes (Gemini) |
| API pricing (input) | $3/M tokens | $5/M tokens | $3.50/M tokens |
| API pricing (output) | $15/M tokens | $15/M tokens | $10.50/M tokens |
| Speed (tokens/sec) | ~80 | ~110 | ~90 |
| Consumer price | $20/month (Claude Pro) | $20/month (ChatGPT Plus) | $19.99/month (Gemini Advanced) |
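To make the speed row concrete, here is a rough estimate of how long each model takes to stream a 1,000-token answer at the throughput figures above. It is a back-of-the-envelope sketch: it assumes constant throughput and ignores time-to-first-token, network latency, and extended-thinking tokens. The function name `generation_seconds` is illustrative.

```python
# Approximate output throughput from the overview table (tokens per second).
# These are the article's rough figures, not provider guarantees.
THROUGHPUT_TPS: dict[str, float] = {
    "Claude 3.7 Sonnet": 80,
    "GPT-4o": 110,
    "Gemini 2.5 Pro": 90,
}


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Estimate streaming time for a response at constant throughput.

    Ignores time-to-first-token, network latency, and any thinking tokens.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    for model, tps in THROUGHPUT_TPS.items():
        print(f"{model}: {generation_seconds(1_000, tps):.1f} s for 1,000 tokens")
```

At these figures, GPT-4o finishes in about 9.1 s, Gemini 2.5 Pro in about 11.1 s, and Claude 3.7 Sonnet in 12.5 s; real-world latency will vary with load and prompt length.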

Benchmark Results 2026: Who Scores Highest?

| Benchmark | Claude 3.7 Sonnet | GPT-4o | Gemini 2.5 Pro | Winner |
|---|---|---|---|---|
| MMLU (knowledge) | 91.2% | 88.7% | 91.8% | Gemini |
| HumanEval (coding) | 93.7% | 90.2% | 92.1% | Claude |
| SWE-bench (real bugs) | 70.3% | 63.8% | 68.9% | Claude |
| MATH (math reasoning) | 96.2% | 93.5% | 97.1% | Gemini |
| GPQA (PhD-level Q&A) | 84.1% | 78.9% | 83.7% | Claude |
| LMSYS Arena (human pref.) | #2 | #3 | #1 | Gemini |
| LongContext (1M tokens) | N/A (200K limit) | N/A (128K limit) | Excellent | Gemini |

Real-World Test 1: Code a Full Feature

We asked all three to build a Python FastAPI endpoint with JWT authentication, rate limiting, and a database connection. Results:

- Claude 3.7 Sonnet: Produced complete, production-ready code with proper error handling, type hints, docstrings, and security best practices. First attempt was deployable. ⭐⭐⭐⭐⭐

- GPT-4o: Working code, but the rate limiting was missing; it took one follow-up prompt to complete. ⭐⭐⭐⭐

- Gemini 2.5 Pro: Working code with some non-standard patterns. More verbose than necessary. ⭐⭐⭐⭐

Coding winner: Claude 3.7 Sonnet

Real-World Test 2: Write a 1,000-Word Blog Post

We asked each model to write an engaging introduction for an article on AI automation for small businesses:

- GPT-4o: Most engaging, conversational, and varied prose. Natural hooks, strong voice. Best for marketing copy. ⭐⭐⭐⭐⭐

- Claude 3.7 Sonnet: Excellent quality, more structured. Slightly more formal but very clear. ⭐⭐⭐⭐⭐

- Gemini 2.5 Pro: Good quality but occasionally encyclopedic in tone. Works well for informational content. ⭐⭐⭐⭐

Writing winner: GPT-4o (barely) — both Claude and GPT-4o are excellent

Real-World Test 3: Analyze a 200-Page Document

We uploaded a 150,000-token technical report and asked each model for a 5-point executive summary with specific data extraction:

- Gemini 2.5 Pro: Processed the entire document in one shot. Accurate, comprehensive summary with specific page references. ⭐⭐⭐⭐⭐

- Claude 3.7 Sonnet: The 150K-token report fit within its 200K window and the summary was accurate, but documents longer than 200K tokens would require chunking. ⭐⭐⭐⭐

- GPT-4o: The 128K context limit forced us to split the document, and the model struggled to synthesize findings across the chunks. ⭐⭐⭐

Long document winner: Gemini 2.5 Pro by a wide margin
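The chunking in this test follows from simple arithmetic: the document, your instructions, and the model's answer all share one context window. The sketch below counts how many chunks the 150,000-token report needs per model, assuming a hypothetical 8,000-token allowance (`reserved_tokens`) for instructions and output; `chunks_needed` is an illustrative name.

```python
import math

# Context windows from the overview table (tokens).
CONTEXT_WINDOWS: dict[str, int] = {
    "Claude 3.7 Sonnet": 200_000,
    "GPT-4o": 128_000,
    "Gemini 2.5 Pro": 1_000_000,
}


def chunks_needed(doc_tokens: int, context_window: int, reserved_tokens: int = 8_000) -> int:
    """Return how many chunks a document must be split into to fit the window.

    reserved_tokens is an assumed allowance for the prompt instructions and the
    model's answer, which consume the same context window as the document.
    """
    usable = context_window - reserved_tokens
    if usable <= 0:
        raise ValueError("reserved_tokens leaves no room for the document")
    return math.ceil(doc_tokens / usable)


if __name__ == "__main__":
    for model, window in CONTEXT_WINDOWS.items():
        print(f"{model}: {chunks_needed(150_000, window)} chunk(s)")
```

With that allowance, Claude and Gemini take the report in a single pass while GPT-4o needs two chunks, which is exactly where cross-chunk synthesis becomes a problem.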

Which Model Should You Use in 2026?

| Task | Best model |
|---|---|
| Coding, debugging, code review | Claude 3.7 Sonnet |
| Creative writing, marketing copy | GPT-4o |
| Long document analysis (>100K tokens) | Gemini 2.5 Pro |
| Complex reasoning, research | Claude 3.7 Sonnet |
| Video and audio understanding | Gemini 2.5 Pro |
| Quick general questions | GPT-4o (fastest) |
| AI agents and tool use | Claude 3.7 Sonnet |
| Google Workspace automation | Gemini 2.5 Pro |
| API cost efficiency | Claude 3.7 (input) / Gemini (output) |

The Smart 2026 Stack: Use All Three

Most power users and developers in 2026 don’t pick one model — they route tasks to the right model. A practical setup:

- Claude 3.7 Sonnet as your primary coding and reasoning model (via Cursor AI or API)

- GPT-4o for content creation, customer-facing copy, and general conversation (ChatGPT Plus)

- Gemini 2.5 Pro for document processing, research, and Google Workspace tasks (Gemini Advanced)
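A routing layer can be as simple as a lookup table. The sketch below encodes the recommendations from the "Which Model Should You Use in 2026?" table, plus an override that sends inputs over 100K tokens to Gemini 2.5 Pro. The `Task` enum, `ROUTES`, and `route()` are hypothetical names, and the strings are display names rather than provider API model IDs; in practice you would map each one to a client call.

```python
from enum import Enum


class Task(Enum):
    """Task categories from the "Which Model Should You Use" table."""
    CODING = "coding"
    WRITING = "writing"
    LONG_DOCUMENT = "long_document"
    REASONING = "reasoning"
    VIDEO_AUDIO = "video_audio"
    QUICK_QUESTION = "quick_question"
    AGENTS = "agents"
    WORKSPACE = "workspace"


# Display names, not API model IDs.
ROUTES: dict[Task, str] = {
    Task.CODING: "Claude 3.7 Sonnet",
    Task.WRITING: "GPT-4o",
    Task.LONG_DOCUMENT: "Gemini 2.5 Pro",
    Task.REASONING: "Claude 3.7 Sonnet",
    Task.VIDEO_AUDIO: "Gemini 2.5 Pro",
    Task.QUICK_QUESTION: "GPT-4o",
    Task.AGENTS: "Claude 3.7 Sonnet",
    Task.WORKSPACE: "Gemini 2.5 Pro",
}

# Mirrors the "Long document analysis (>100K tokens)" row.
LONG_CONTEXT_THRESHOLD = 100_000


def route(task: Task, prompt_tokens: int = 0) -> str:
    """Pick a model for a task; very long inputs go to the long-document model."""
    if prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return ROUTES[Task.LONG_DOCUMENT]
    return ROUTES[task]
```

For example, `route(Task.CODING)` returns Claude 3.7 Sonnet, but `route(Task.CODING, prompt_tokens=150_000)` returns Gemini 2.5 Pro because the input exceeds the long-document threshold.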

At roughly $20/month each (Gemini Advanced is $19.99), about $60/month gets you all three elite AI models, covering most professional use cases.

FAQ: Claude 3.7 vs GPT-4o vs Gemini 2.5 Pro 2026

Is Claude 3.7 better than GPT-4o?

For coding and complex reasoning — yes. For creative writing and the ChatGPT ecosystem (plugins, memory, voice) — GPT-4o remains highly competitive. They are effectively tied for general use.

Is Gemini 2.5 Pro the best AI in 2026?

On benchmarks, Gemini 2.5 Pro leads several categories (MMLU, MATH, and LMSYS Arena). In real-world use, the best model depends on your specific task: Gemini is unmatched for long context and video, but Claude outperformed it on coding in our tests, and both Claude and GPT-4o produced stronger prose.

Which is cheapest for API use?

For input tokens, Claude 3.7 ($3/M) and Gemini ($3.50/M) are cheaper than GPT-4o ($5/M). For output, Gemini ($10.50/M) beats both Claude ($15/M) and GPT-4o ($15/M).
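Which model is cheapest depends on your input/output mix. This sketch applies the per-million-token prices quoted in this article's overview table (verify current rates on each provider's pricing page before budgeting); `Pricing`, `request_cost`, and `cheapest` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


# Prices as quoted in the overview table above.
PRICES: dict[str, Pricing] = {
    "Claude 3.7 Sonnet": Pricing(3.00, 15.00),
    "GPT-4o": Pricing(5.00, 15.00),
    "Gemini 2.5 Pro": Pricing(3.50, 10.50),
}


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at per-million-token prices."""
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


def cheapest(input_tokens: int, output_tokens: int) -> str:
    """Name of the model with the lowest cost for this token mix."""
    return min(PRICES, key=lambda m: request_cost(PRICES[m], input_tokens, output_tokens))


if __name__ == "__main__":
    for mix in [(10_000, 2_000), (100_000, 1_000)]:
        costs = {m: f"${request_cost(p, *mix):.4f}" for m, p in PRICES.items()}
        print(mix, costs, "cheapest:", cheapest(*mix))
```

For a 10,000-in / 2,000-out request this gives $0.060 (Claude), $0.080 (GPT-4o), and $0.056 (Gemini). For an input-heavy 100,000-in / 1,000-out request, Claude becomes the cheapest at $0.315, versus $0.3605 for Gemini and $0.515 for GPT-4o.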

Can I use all three for free?

Yes. Claude.ai, ChatGPT, and Gemini all have free tiers. Free Claude includes Claude 3.7 Sonnet, but without extended thinking. Free ChatGPT gives limited GPT-4o access. Gemini 2.5 Pro is available for free, with rate limits, via Google AI Studio.

What is Claude 3.7’s extended thinking mode?

Claude 3.7’s hybrid reasoning mode allows it to “think” through complex problems step-by-step before responding — similar to OpenAI’s o1/o3 models. This significantly improves performance on math, science, and complex coding tasks at the cost of higher latency and token usage.
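In the API, extended thinking is opt-in per request via a `thinking` parameter with a token budget. The sketch below only builds the JSON body for Anthropic's Messages API so it runs offline; the helper name `build_thinking_request` and its default budgets are assumptions, and you would send the body with the official `anthropic` SDK or an HTTPS POST to the Messages endpoint.

```python
import json


def build_thinking_request(prompt: str, budget_tokens: int = 8_000, max_tokens: int = 16_000) -> dict:
    """Build a Messages API request body with extended thinking enabled.

    Hypothetical helper; the defaults are illustrative, not recommendations.
    """
    # The API requires a thinking budget of at least 1,024 tokens
    # that is smaller than max_tokens.
    if budget_tokens < 1_024:
        raise ValueError("budget_tokens must be at least 1,024")
    if max_tokens <= budget_tokens:
        raise ValueError("max_tokens must be larger than budget_tokens")
    return {
        "model": "claude-3-7-sonnet-20250219",
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    body = build_thinking_request("How many primes are there below 100?")
    print(json.dumps(body, indent=2))
```

A larger `budget_tokens` gives the model more room to reason, at the cost of latency and additional billed output tokens, which is the trade-off described above.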